Jan. 2024 | Arizona State University | Class Assignment, Graduate Second Year

Group Project | Supervisors: Nicholas Pilarski, Garth Paine

Project Statement

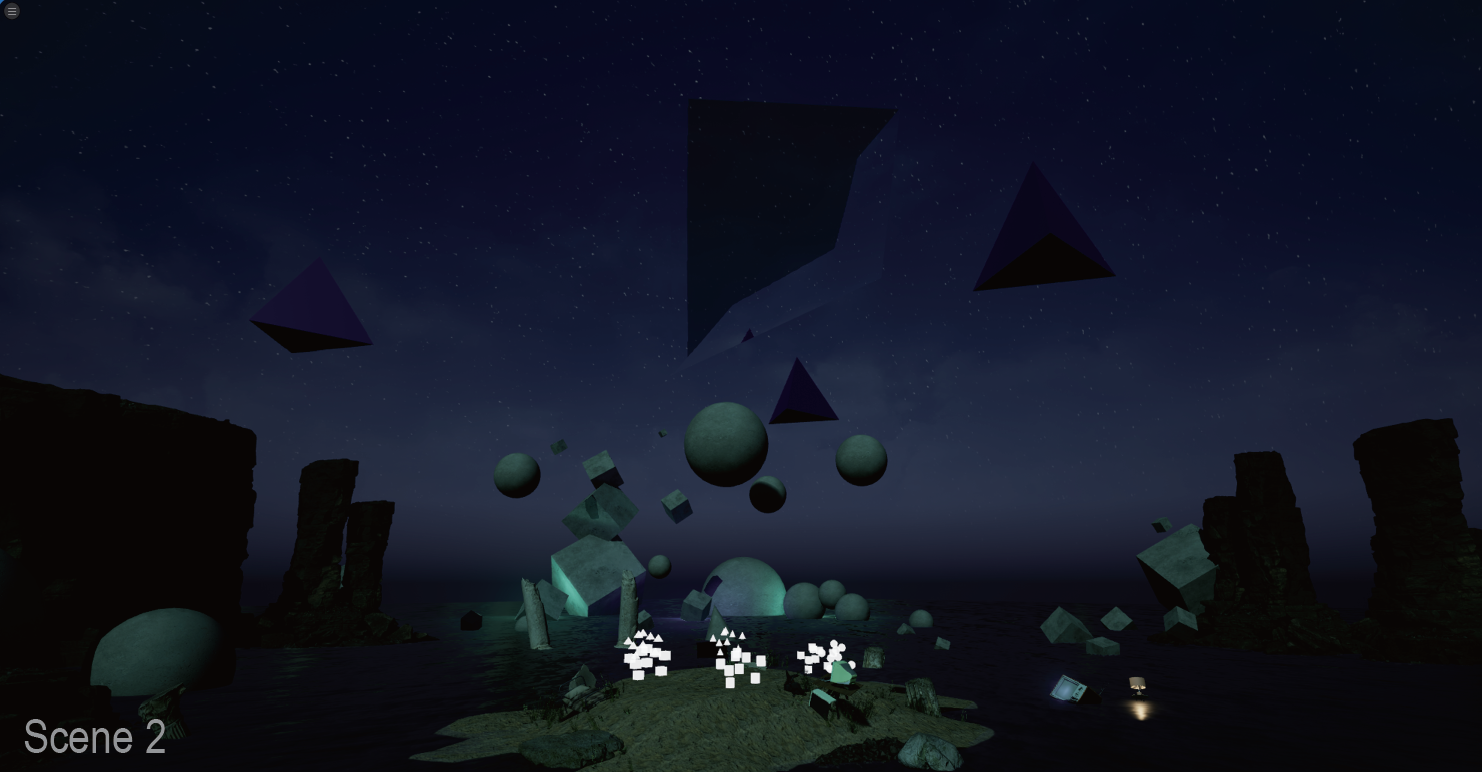

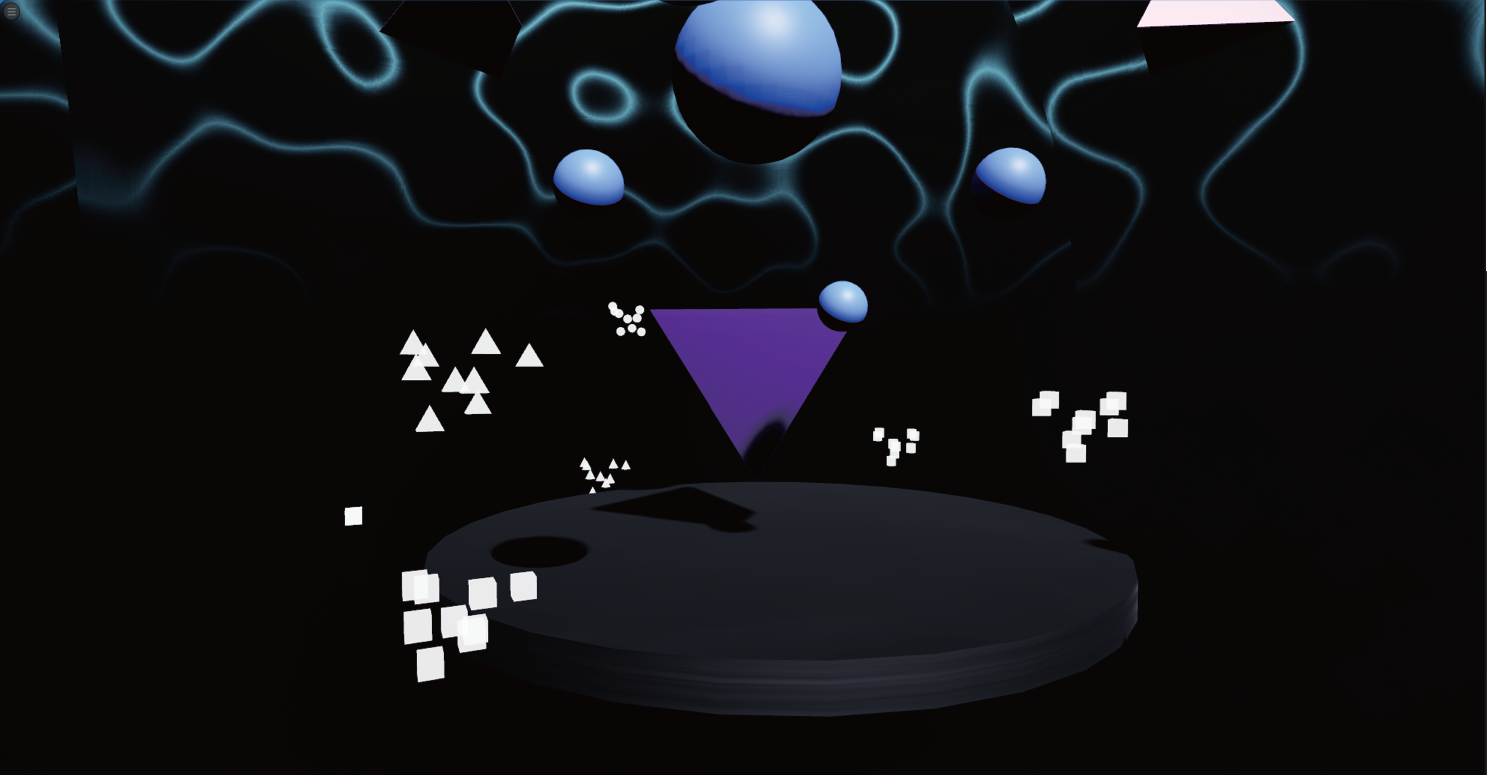

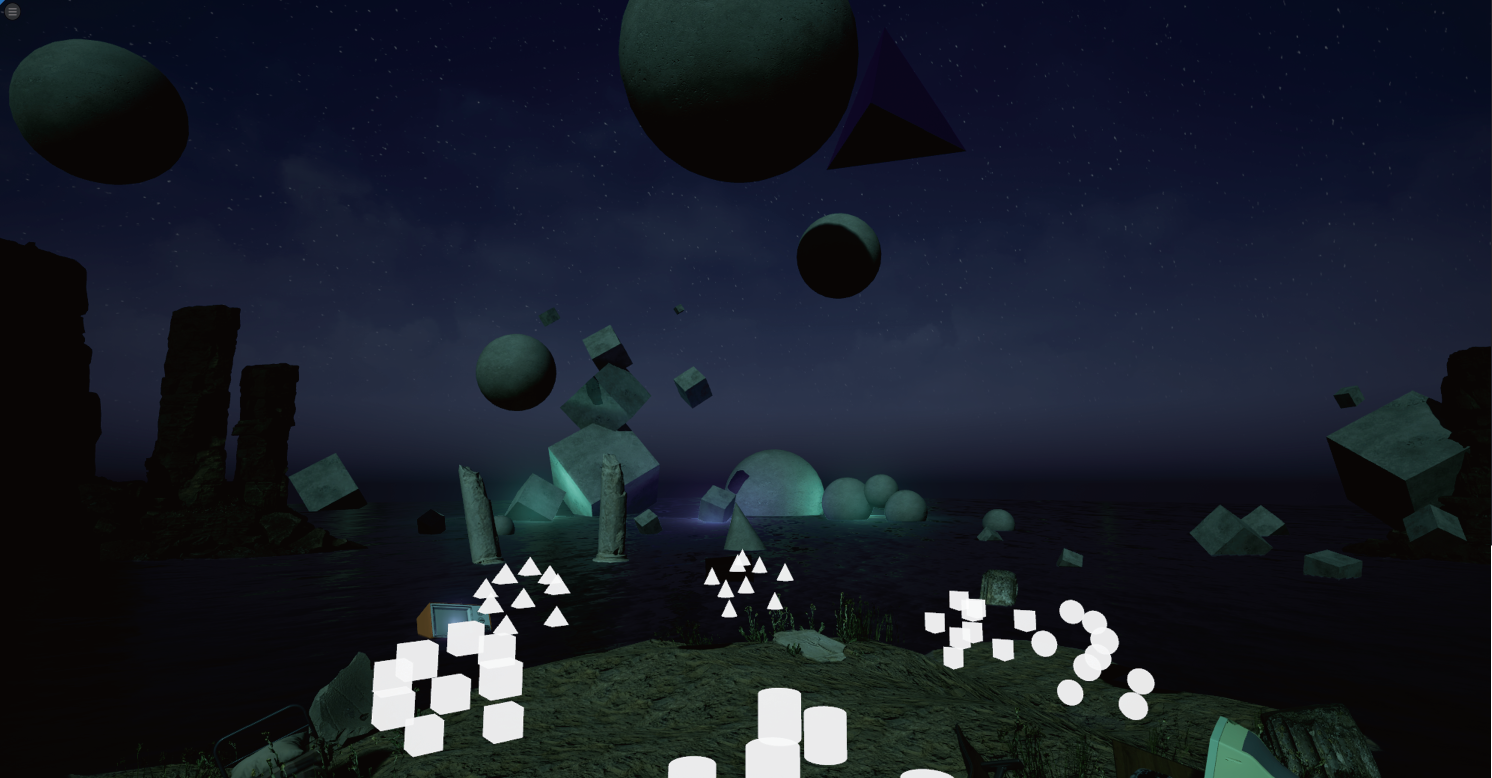

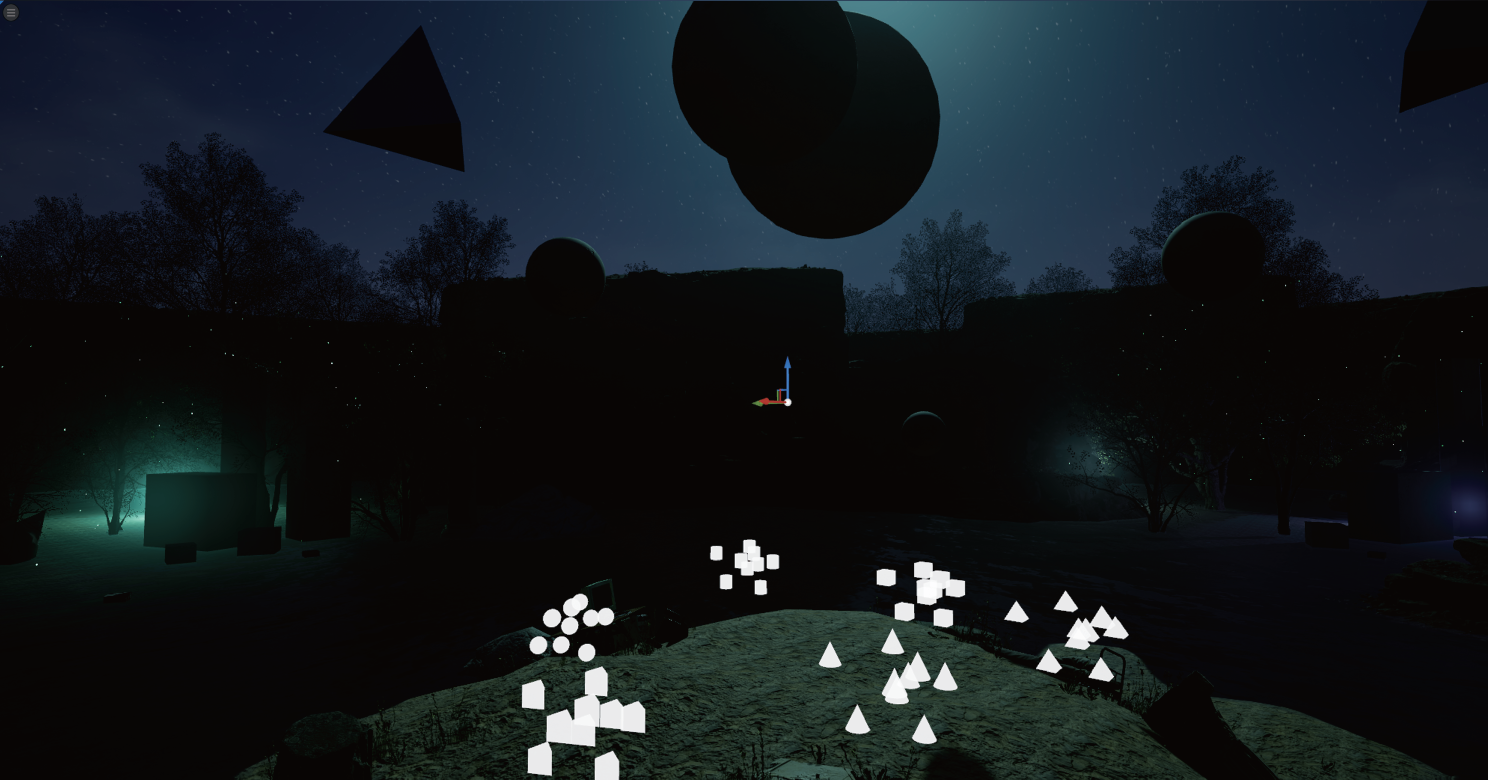

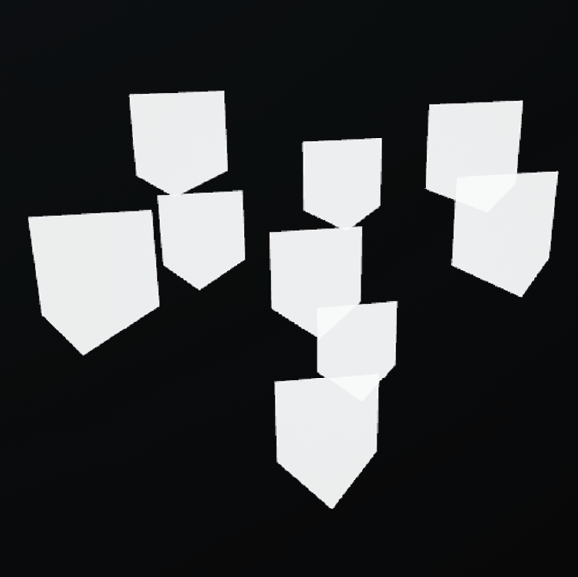

This piece addresses the idea of abstract space, both visually and sonically. During the experience, the user enters two distinct spaces; one is informed primarily by perceptual factors (lighting, color, etc.) abstracted to simple shapes and forms, and the other is informed by real-world objects and environments users have likely encountered. However, even in the second space, the organization of the real-world objects implies a space that likely does not exist in our world; a computer and phone sit isolated from any connection to an electrical or internet backbone infrastructure, surrounded by various floating objects and abstract shapes.

Many programmatic interpretations of these scenes are possible; perhaps the initial scene harkens to the primitive beginnings of 3D computer graphics, whereas the latter scene represents a flooded apocalyptic wasteland. We didn’t actively engage in these post-hoc storylines when making our environment, but it is certainly the case that technology, abstraction, and “impossible” spaces exist as common motifs that bring cohesion to this surreal environment.

The central subject of our project is how the audience's view of the same environment changes; we want to create an experience in which everything feels interconnected yet distinct. The most obvious change is the swap between the two scenes, which are programmed within the same world. We aim to present them as two sides of the same coin, and entering the pyramid is the event that flips this coin. Other elements act as filters on the audience's view: the geometric clouds that float around the environment serve as looking glasses. Each cloud has a sound assigned to it to indicate its character, and each produces a different effect when the audience touches it (while looking through it).

Experience Walkthrough

Full Experience

Narrated Walkthrough (Narrated by Tommy McPhee)

Equipment & Software

All the environments and animations are created in Unreal Engine 5.3, and audiences will experience the content through an Oculus Quest 2 headset.

The data from the interaction will be sent from Unreal Engine to SPAT Revolution through OSC messages via Max.

All the soundtracks are managed in Reaper and then sent to SPAT Revolution for spatialization. After that, the audio is sent back to Reaper as a 50-channel soundtrack and played through a 5th-order ambisonic dome.

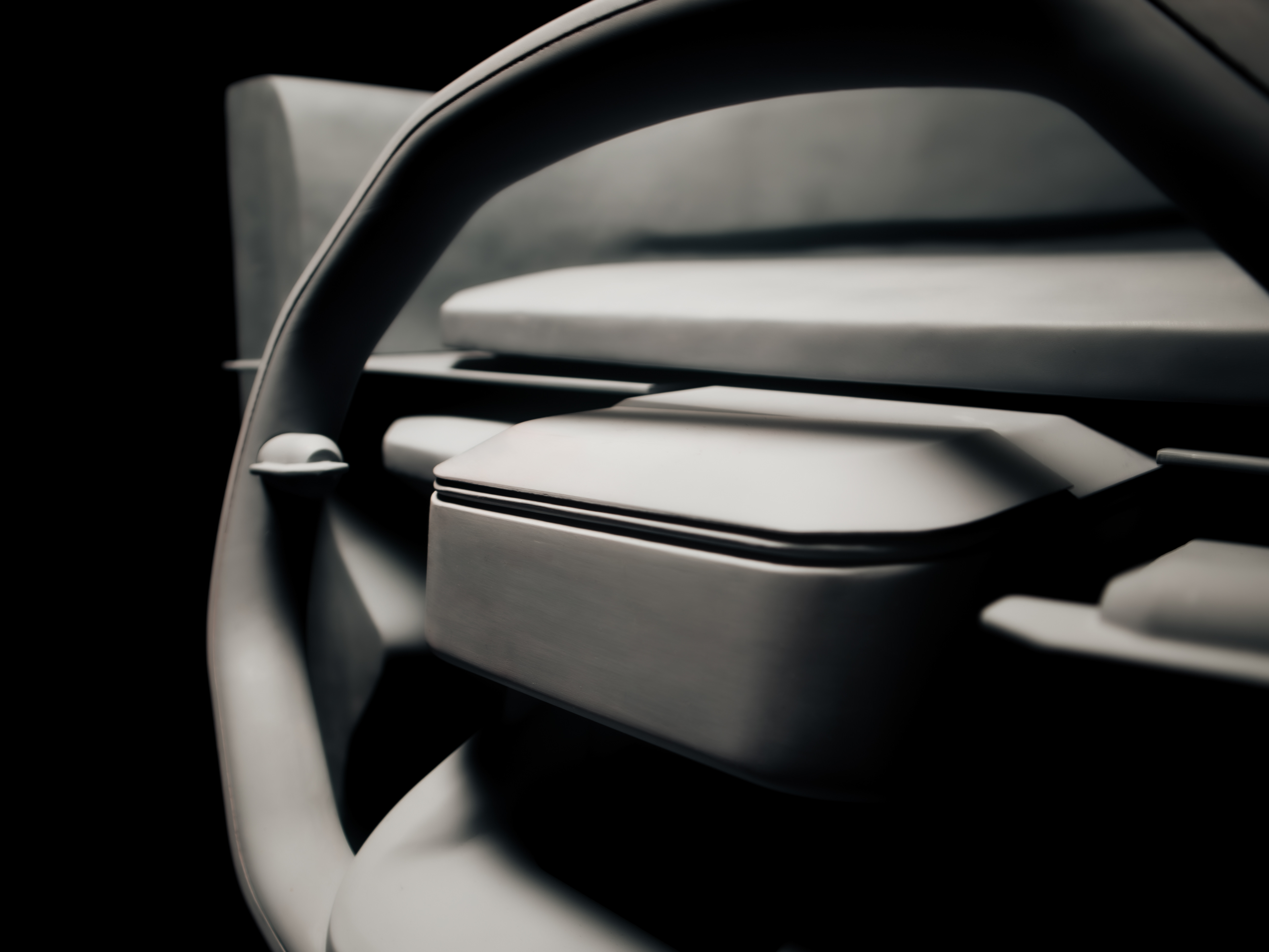

The Environment

There are two scenes in the project: the first is more abstract, and the second contains more real-world elements. Because the two scenes are conceived as the same world, both levels have the same geometric objects distributed around the playing area.

Boundary

The floating platform in the first scene and the island in the second scene indicate the boundary of the dome.

Each scene has the same assets distributed around the environment.

Transition

The huge pyramid that floats above the player’s head is what connects the two scenes. When it drops onto the player at the end of the first scene, the player is transferred to the second scene.

The pyramid stays in scene 2 after it finishes its job; as the experience progresses, the player sees it rise back to its original location.

Interaction

The Clouds

The geometric clouds that float around the player, and the sounds they make, construct our sound space and interaction throughout the whole experience. The blueprints below implement their basic functions in Unreal.

1. These scripts animate the movement of the clouds.

2. When the player touches a cloud in scene 2, the reverb settings and spread factor of the associated sound change.

3. When the player collides with a cloud, a sound is chosen at random from our six soundtracks and played through the headset.
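The collision behaviour in items 2 and 3 can be sketched outside of Blueprints. The Python below is only an illustration, not the project's actual Blueprint logic: the file names in `SOUNDTRACKS`, the `CloudResponse` type, and the `on_cloud_collision` function are hypothetical, and it assumes that a collision in scene 1 leaves the reverb and spread untouched, which the description above does not state.

```python
import random
from dataclasses import dataclass

# Hypothetical placeholder names; the text only says there are six soundtracks.
SOUNDTRACKS = [f"cloud_sound_{i}.wav" for i in range(1, 7)]


@dataclass
class CloudResponse:
    headset_sound: str   # sound played through the headset on collision
    enable_reverb: bool  # whether the channel reverb should be switched on
    spread: int          # spread factor for the associated sound


def on_cloud_collision(scene: int, rng: random.Random) -> CloudResponse:
    """Return what should happen when the player collides with a cloud."""
    sound = rng.choice(SOUNDTRACKS)  # random pick among the six soundtracks
    in_scene_two = scene == 2        # reverb/spread changes apply in scene 2
    # Assumption: in scene 1 the spread stays at 0 and reverb stays off.
    return CloudResponse(sound, in_scene_two, 100 if in_scene_two else 0)
```

Passing a seeded `random.Random` instance makes the random choice repeatable when testing.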

OSC Messages

1. Various objects, including the clouds of shapes, the phone vibration sound, and the TV white noise, are repositioned in SPAT using OSC by taking their XYZ coordinates from Unreal and scaling them closer to the listener by a factor of 100.

2. Collisions with the clouds of shapes trigger sonic changes by enabling the channel reverb in SPAT and changing the spread factor of the associated sound from 0 to 100 using OSC.

3. The position of the user in the dome is used to reposition the background sound in the first scene to the opposite location of the dome. It is also used to affect the total amount of reverb in the room; the farther the user deviates from the origin of the VR environment, the more the reverb is heard. This also causes a dramatic increase in the reverb when the pyramid drops and the scenes switch due to the user being repositioned farther from the origin.
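The three mappings above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not the Max patch we used: "opposite location" is interpreted here as mirroring the user's position through the origin in the horizontal plane, the reverb curve is assumed to be linear and clamped, and the OSC address in the usage note is a placeholder that has not been checked against SPAT Revolution's OSC dictionary. The message encoder follows the OSC 1.0 layout (null-padded strings, big-endian 32-bit floats).

```python
import math
import struct

SCALE_FACTOR = 100.0  # from the text: coordinates are scaled down by 100


def scale_position(xyz, factor=SCALE_FACTOR):
    """Bring Unreal coordinates closer to the listener by dividing by factor."""
    return tuple(c / factor for c in xyz)


def opposite_position(xyz):
    """Assumed meaning of 'opposite': mirror horizontally, keep the height."""
    x, y, z = xyz
    return (-x, -y, z)


def reverb_amount(user_xyz, max_distance):
    """Assumed linear curve: 0.0 at the origin, 1.0 at max_distance or beyond."""
    distance = math.sqrt(sum(c * c for c in user_xyz))
    return min(distance / max_distance, 1.0)


def _osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, *floats):
    """Build a raw OSC 1.0 message carrying only float32 arguments."""
    type_tags = "," + "f" * len(floats)
    payload = struct.pack(f">{len(floats)}f", *floats)
    return _osc_string(address) + _osc_string(type_tags) + payload
```

For example, `osc_message("/source/1/xyz", *scale_position((250.0, -100.0, 50.0)))` produces a packet that could be sent over UDP; the address shown is hypothetical.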

Roles and Responsibilities

In this project, I'm responsible for:

- Planning the visual sequences and the interactions of the experience.

- Combining all parts of the project, making animations, and adjusting the lighting, materials, and layout of the level in Unreal Engine.

- Programming the moving actors based on our shared concept.

- Setting up the OSC communication from Unreal Engine to Max.

- Providing some of the soundtracks and ideas for spatialization in scene two.

Team Collaboration:

I collaborated with Tommy McPhee, who was responsible for the vast majority of the sound design, mixing, spatialization, and OSC communication between Max and SPAT. He also provided several aesthetic and visual elements in our first scene.
